Tony Bonnaire

CNRS AI Research Scientist

Institut d'Astrophysique Spatiale (Université Paris-Saclay)

About me

I am an interdisciplinary AI researcher hosted at the Institut d'Astrophysique Spatiale (Orsay, Université Paris-Saclay), working at the interface between physics and AI. I use and adapt tools from theoretical physics to analyse, understand and improve generalization in machine learning models. I also design, implement and train AI-based models to solve challenging problems in physics, especially in cosmology and disordered systems. Currently, on the theoretical side, I am focusing on how generalization emerges in diffusion-based models; on the practical side, I am working on turning these models into powerful tools for studying complex, high-dimensional physical systems. Feel free to contact me if you would like to discuss AI theory, in particular generative models, or their applications in science and beyond.

Interests

- Generative Models

- High-dimensional landscapes

- Statistical physics

- Astrophysics and Cosmology

Education

- Ph.D. in Astrophysics & Cosmology, 2021
  Université Paris-Saclay

- Engineering school, 2017
  CentraleSupélec

Experience

AI and physics: theory and applications.

AI for physics and physics for AI: developing AI-based tools for astrophysics and cosmology, and exploring the links between theoretical physics and AI to better understand AI systems. Teaching duties in the data science program of PSL University.

Applying statistical physics models to understand machine learning algorithms.

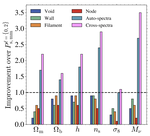

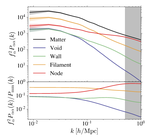

Cosmic web environments: identification, characterisation and quantification of their cosmological information.

Design and development of unsupervised algorithms to deinterleave radar pulses collected by satellites.

Publications

Contact

- bonnaire.tony@gmail.com

- 45 rue d'Ulm, Paris, 75005

- Staircase B, 3rd floor